EU AI Act: What Businesses Must Know

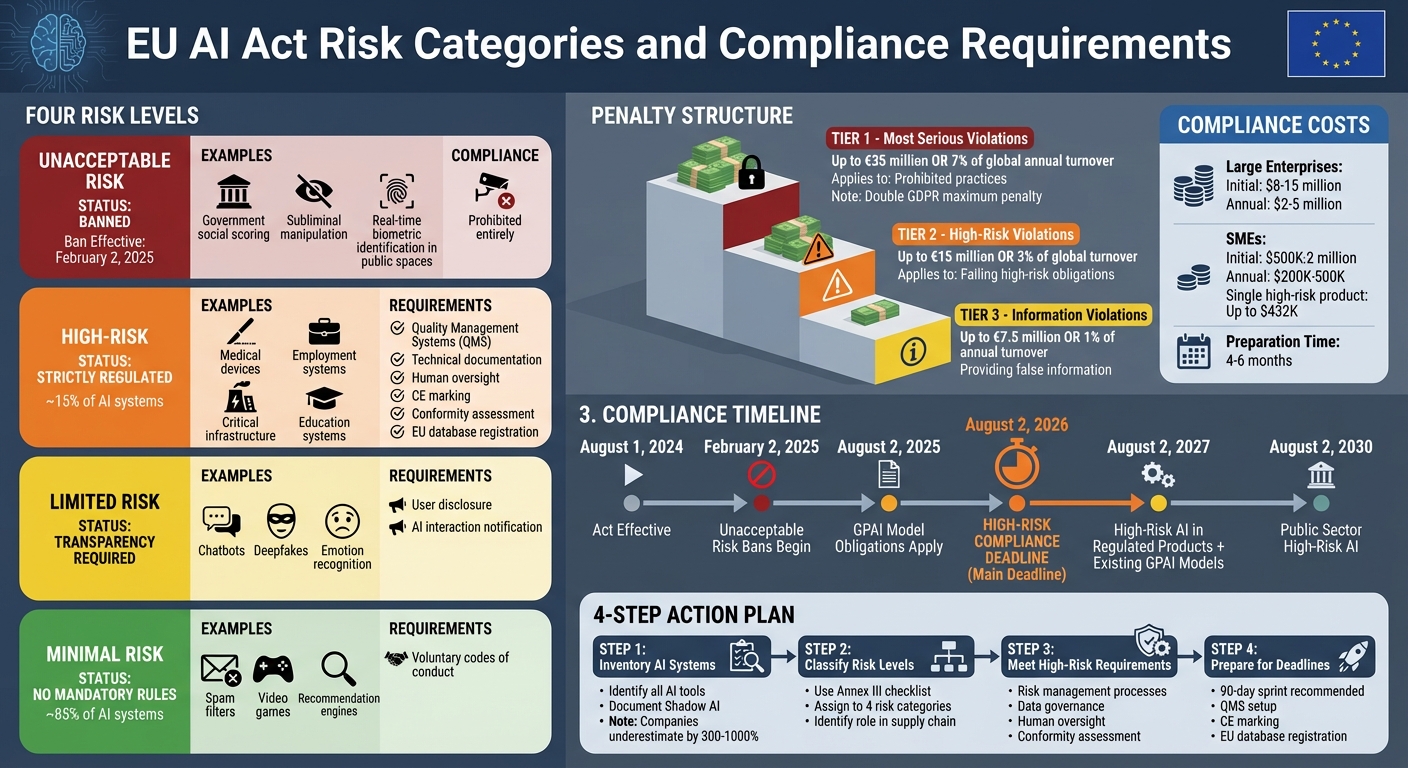

The EU AI Act is the European Union's regulation governing artificial intelligence. In force since August 1, 2024, it sets rules for AI systems based on their risk level. Most obligations for high-risk AI systems apply from August 2, 2026, and the most serious violations carry fines of up to €35 million or 7% of global annual turnover, well above the GDPR maximum of €20 million or 4%.

Key Points:

- Risk Levels: AI is classified into 4 categories - Unacceptable (banned), High-Risk (strictly regulated), Limited Risk (transparency required), and Minimal Risk (no major rules).

- Who Must Comply: Any business offering AI in the EU or using AI outputs within the EU, regardless of where the company is based.

- High-Risk Requirements: Includes conformity assessments, technical documentation, human oversight, and CE marking.

- Penalties: Up to €35M or 7% of global turnover for serious violations.

Action Plan:

- Inventory AI Systems: Identify and classify all AI tools used.

- Classify Risk Levels: Determine each system’s risk category.

- Meet High-Risk Requirements: Implement risk management, human oversight, and technical documentation.

- Prepare for Deadlines: Ensure compliance by August 2, 2026.

Companies must act quickly, as compliance can take 4–6 months and costs can range from $500K to $15M, depending on business size. Non-compliance risks steep penalties and market restrictions.

EU AI Act Risk Categories and Compliance Requirements

What the EU AI Act Requires: Risk Categories Explained

The Four Risk Levels and What They Mean

The EU AI Act divides AI systems into four risk levels, each with specific rules to follow. At the top are Unacceptable Risk systems, which are outright banned. These include government-led social scoring, subliminal manipulation, and most forms of real-time biometric identification in public spaces. The bans on these systems officially began on February 2, 2025.

High-Risk systems are allowed but come with strict regulations. This category includes AI used in critical areas such as medical devices, vehicles, employment, education, and critical infrastructure. To comply, these systems must implement Quality Management Systems (QMS), maintain detailed technical documentation, ensure human oversight, and secure a CE marking before they can be marketed.

Limited Risk AI covers tools like chatbots, deepfakes, and emotion recognition systems. The main requirement for these systems is transparency - users must be informed when they are interacting with AI.

Finally, Minimal Risk AI includes the majority of AI applications (about 85% of them). Examples are spam filters, video games, and recommendation engines. These systems aren't subject to mandatory rules, although the Act encourages voluntary codes of conduct.

Responsibilities for Providers, Deployers, and Distributors

"The AI Act does not regulate AI systems in the abstract - it regulates actors in the AI value chain according to their role and the risks their AI systems present."

Providers, who create or modify AI systems, carry the heaviest responsibilities. They must perform conformity assessments, keep technical documentation up to date, implement QMS, and register high-risk systems in the EU AI database. Providers outside the EU must also appoint an EU representative.

Deployers, or users of AI systems, have duties that include ensuring human oversight, monitoring risks, logging system operations, and reporting any incidents. If a deployer significantly modifies an AI system or markets it under their own brand, they may be reclassified as a provider.

Importers and Distributors are tasked with verifying that providers meet all compliance requirements. They must also ensure that AI systems display the CE marking before they are sold.

Penalties and How They're Enforced

The EU AI Act enforces compliance through a tiered penalty system based on the severity of violations. For the most serious breaches, such as engaging in prohibited practices, fines can reach €35 million or 7% of global annual turnover, whichever is higher. For failing to meet high-risk obligations, fines can reach €15 million or 3% of global turnover. Providing false information to regulators can result in penalties of up to €7.5 million or 1% of annual turnover. Because each tier scales with turnover, exposure grows with company size, which makes early and thorough compliance important.
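The tiers above reduce to simple arithmetic: take the larger of the fixed cap and the percentage of turnover. The following is a minimal Python sketch, assuming the euro caps stated in the Act and the "whichever is higher" rule. The tier names and the `max_fine_eur` helper are illustrative, and the sketch ignores the separate treatment the Act gives SMEs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PenaltyTier:
    fixed_cap_eur: int   # fixed ceiling in euros
    turnover_pct: int    # percentage of global annual turnover


# Tier names are illustrative labels, not terms from the Act.
TIERS = {
    "prohibited_practice": PenaltyTier(35_000_000, 7),
    "high_risk_obligation": PenaltyTier(15_000_000, 3),
    "false_information": PenaltyTier(7_500_000, 1),
}


def max_fine_eur(tier_name: str, global_turnover_eur: float) -> float:
    """Upper bound of the fine: the higher of the fixed cap and the turnover share."""
    tier = TIERS[tier_name]
    turnover_based = global_turnover_eur * tier.turnover_pct / 100
    return max(tier.fixed_cap_eur, turnover_based)
```

For a company with €1 billion in global turnover, the prohibited-practice ceiling works out to €70 million, because 7% of turnover exceeds the €35 million fixed amount.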


Step 1: Identify and Classify Your AI Systems

Create an Inventory of All AI Systems

The first step to compliance is understanding exactly which AI systems your organization is using. Studies show that businesses often underestimate their AI integrations by 300% to 1,000%. In other words, many companies operate three to ten times more AI tools than they’ve officially documented.

"You cannot document what you have not found."

- Naman Mishra, Co-Founder & CTO, Repello AI

Start by listing every AI system in use. This includes standalone models, AI features embedded in SaaS platforms (like CRM or HR systems), general-purpose AI tools such as large language models (LLMs) and Copilots, and third-party APIs used for tasks like fraud detection or risk scoring. Pay special attention to "Shadow AI" - unauthorized tools like browser extensions or Slack bots. Engage IT, procurement, data science, and business units to uncover all systems.

For each system, document key details: its name, owner, purpose, types of data it processes (including personal or sensitive data), and where it’s used. Map out data flows to track what information goes into the system, how it’s processed, and the outputs it generates. Assign a specific executive to oversee each system, ensuring the documentation stays up to date.
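One way to keep these details consistent is a structured record per system. The sketch below is an assumption about how such a record could look, not a format the Act prescribes. The `AISystemRecord` class, its field names, and the `missing_fields` helper are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AISystemRecord:
    name: str
    owner: str                     # accountable executive for this system
    purpose: str
    data_types: list[str]          # e.g. "personal", "sensitive"
    used_in: list[str]             # business units or products
    inputs: list[str] = field(default_factory=list)    # data flowing in
    outputs: list[str] = field(default_factory=list)   # results it produces
    sanctioned: bool = True        # False flags "Shadow AI"


REQUIRED = ("name", "owner", "purpose", "data_types", "used_in")


def missing_fields(record: AISystemRecord) -> list[str]:
    """Return the required fields that are still empty."""
    return [name for name in REQUIRED if not getattr(record, name)]
```

Running `missing_fields` across the inventory gives a quick list of records that still lack an owner or a documented purpose.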

Once your inventory is complete, the next step is to classify these systems by risk level.

Assign Risk Levels to Each System

Using the Act’s four-tier framework, classify each system based on its intended purpose. Focus on what the system is designed to do, as outlined in the provider’s documentation - not on the underlying technology.

"Classification follows intended purpose, not marketing claims. A vendor describing their tool as a 'recruitment assistant' does not determine whether it falls under Annex III."

- Matt Chappell

To identify high-risk systems, use the Annex III checklist. This involves reviewing your tools against eight high-risk domains: biometrics, critical infrastructure, education, employment, essential services, law enforcement, migration, and justice. Research from appliedAI found that 40% of enterprise AI systems had unclear risk classifications, particularly in employment and critical infrastructure.

If a system is traditional rule-based software and not AI, document your reasoning to show due diligence. Similarly, if an Annex III system doesn’t qualify as high-risk under the Article 6 exception, record your justification and notify the relevant authority.
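The screening described above can be sketched as a first-pass filter. This is an illustrative Python sketch only: the domain labels mirror the eight areas listed in this section, while `screen_system`, its parameters, and the status strings are hypothetical. A match means the system needs legal review, not that it is definitively high-risk.

```python
ANNEX_III_DOMAINS = frozenset({
    "biometrics", "critical infrastructure", "education", "employment",
    "essential services", "law enforcement", "migration", "justice",
})


def screen_system(domains, is_ai=True, claims_article_6_exception=False,
                  justification=""):
    """First-pass screen of a system's intended-purpose domains against Annex III."""
    # Both "not AI" and "Article 6 exception" outcomes need written reasoning.
    needs_reason = (not is_ai) or claims_article_6_exception
    if needs_reason and not justification.strip():
        raise ValueError("document the reasoning before recording this outcome")
    if not is_ai:
        return {"status": "not_ai", "matched": [], "justification": justification}
    matched = sorted(ANNEX_III_DOMAINS.intersection(d.lower() for d in domains))
    if not matched:
        status = "not_annex_iii"
    elif claims_article_6_exception:
        status = "annex_iii_exception"
    else:
        status = "high_risk_candidate"
    return {"status": status, "matched": matched, "justification": justification}
```

Forcing a justification string for the "not AI" and "exception" outcomes keeps the due-diligence record next to the classification itself.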

With your systems classified, the next step is identifying your role in the AI supply chain.

Identify Your Role in the AI Supply Chain

For every AI system in your inventory, determine your role in the supply chain. Are you a Provider (developing the system or marketing it under your brand), a Deployer (using the system professionally), an Importer (placing a non-EU system on the EU market), or a Distributor (making the system available without modifications)?

Keep in mind that modifying a system or adding your brand can change your designation to Provider. For example, if you attach your name to a high-risk AI system, make significant changes not covered in the original assessment, or alter its intended purpose so it becomes high-risk, you’ll face stricter compliance requirements. These include conformity assessments, detailed technical documentation, and CE marking.
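The role rules in this section can be expressed as a small decision function. The sketch below is a simplification under stated assumptions: the flag names and `determine_role` are hypothetical, and real cases (for example, an organization that is both importer and deployer) need legal judgment rather than a single return value.

```python
from enum import Enum


class Role(Enum):
    PROVIDER = "provider"
    DEPLOYER = "deployer"
    IMPORTER = "importer"
    DISTRIBUTOR = "distributor"


def determine_role(*, develops=False, own_brand=False,
                   substantial_modification=False,
                   repurposed_to_high_risk=False,
                   places_non_eu_system_on_market=False,
                   resells_unmodified=False) -> Role:
    """Map the situations described above to a single role."""
    # Any of these triggers moves an organization into the Provider role.
    if develops or own_brand or substantial_modification or repurposed_to_high_risk:
        return Role.PROVIDER
    if places_non_eu_system_on_market:
        return Role.IMPORTER
    if resells_unmodified:
        return Role.DISTRIBUTOR
    # Default: professional use of someone else's system.
    return Role.DEPLOYER
```

Checking the Provider triggers first reflects the point above: rebranding or significantly modifying a system overrides whatever role you held before.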

"The AI Act rewards proactive governance and penalises informal or undocumented modification."

- Iveta Bozhkova-Yuskeselieva, Legal Expert

If you’re a deployer using third-party high-risk tools, request the EU Declaration of Conformity from your vendors to verify compliance. Non-EU providers of high-risk systems must appoint an EU-based legal representative before entering the market. Clearly document your role for each system to ensure compliance and avoid penalties from misclassification.

Properly identifying, classifying, and assigning roles for your AI systems lays the groundwork for managing compliance with high-risk systems, which will be addressed in later steps.

Step 2: Meet Requirements for High-Risk AI Systems

Set Up Risk Management Processes

Establish a continuous risk management process that runs throughout the system's lifecycle. According to Article 9, you must identify, evaluate, and address risks related to health, safety, and fundamental rights. Think of risk assessment as a checkpoint in your machine learning development process - integrating compliance checks directly into your training pipelines. This ensures that every time your model is retrained, a review of risk controls is automatically triggered.
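As a rough illustration of a retraining checkpoint, the sketch below wraps a training step so that risk checks always run before a model is approved for release. Everything here is hypothetical: the function names, the metric names, and the thresholds are placeholders, and Article 9 does not prescribe any particular mechanism.

```python
from typing import Callable, Dict

Metrics = Dict[str, float]
RiskCheck = Callable[[Metrics], bool]


def retrain_with_risk_review(train: Callable[[], Metrics],
                             checks: Dict[str, RiskCheck]) -> dict:
    """Run training, then every risk check; block release if any check fails."""
    metrics = train()
    failed = [name for name, check in checks.items() if not check(metrics)]
    return {"metrics": metrics, "failed_checks": failed,
            "release_approved": not failed}


def fake_train() -> Metrics:
    # Stand-in for a real training job; values are invented for the example.
    return {"accuracy": 0.91, "false_positive_gap": 0.08}


checks = {
    "accuracy_floor": lambda m: m["accuracy"] >= 0.90,           # placeholder threshold
    "fairness_gap": lambda m: m["false_positive_gap"] <= 0.05,   # placeholder threshold
}

result = retrain_with_risk_review(fake_train, checks)
```

Here the model clears the accuracy check but fails the fairness check, so `release_approved` is `False` and the failed check name is available for the risk log.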

"The EU AI Act is product liability regulation, not policy. Enforcement targets the system, not just the company."

In December 2025, a European fintech company led by CEO Jinal Shah successfully aligned 12 AI systems with the EU AI Act. These systems were used for lending and fraud detection. They achieved this by implementing the "AI Governor" platform, which automated risk classification and technical documentation. Over a 10-week period, they completed documentation for three high-risk systems and set up automated logging for all AI decisions, avoiding potential fines of up to $16.2 million.

Maintain Data Quality and Governance Standards

Article 10 emphasizes the importance of using training, validation, and testing datasets that are relevant, representative, accurate, and complete. This requires documenting the origins and preprocessing steps of all training data, including data from third-party sources, to clearly outline the foundation of your model. Early data profiling helps identify and address biases in representation. Your Quality Management System (QMS) should integrate data governance with risk management and ongoing monitoring, as required under Article 17.
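Early data profiling can start with something as simple as measuring how each group is represented in a dataset. The sketch below is a minimal example of that idea. The `representation_report` helper and the 10% default threshold are assumptions chosen for illustration, not values taken from the Act.

```python
from collections import Counter


def representation_report(records, attribute, min_share=0.10):
    """Share of each group for one attribute, flagging groups below min_share."""
    counts = Counter(record[attribute] for record in records)
    total = sum(counts.values())
    return {
        group: {
            "count": n,
            "share": n / total,
            "underrepresented": n / total < min_share,
        }
        for group, n in counts.items()
    }
```

The output can be stored with the dataset summary so that a later review can see which groups were flagged and what was done about them.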

"Regulators do not operate on trust; they operate on evidence."

To meet these standards, you'll need to prepare "evidence packs" that include dataset summaries, audit logs, and records of bias mitigation for regulatory reviews. Failing to comply with Article 10 could result in fines of up to €15 million or 3% of global annual turnover, whichever is higher.

Add Human Oversight and Security Controls

Article 14 requires that human oversight be integrated into your system's design. This allows personnel to monitor operations, understand limitations, and intervene when necessary. Depending on your system's risk level, you can choose from oversight methods like human-in-the-loop, human-on-the-loop, human-in-command, or post-hoc review. It's essential to implement physical override controls that work in production environments, not just during testing. High-risk systems must also meet stringent standards for accuracy and cybersecurity to defend against threats like data poisoning or evasion attacks. Automated logging should capture all relevant reference, input, and output data. Additionally, train human supervisors to recognize automation bias and understand the technical limitations of the system.
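The oversight modes above differ mainly in when a person is pulled into a decision. The sketch below shows one possible routing rule for two of them. The `route_decision` function, the confidence threshold, and the `override_active` flag are hypothetical, and the right design depends on the system and its risks.

```python
from enum import Enum


class OversightMode(Enum):
    IN_THE_LOOP = "human-in-the-loop"   # a person approves every decision
    ON_THE_LOOP = "human-on-the-loop"   # a person monitors and can step in


def route_decision(confidence: float, mode: OversightMode,
                   review_threshold: float = 0.9,
                   override_active: bool = False) -> str:
    """Decide whether an AI output may proceed without a human."""
    if override_active:
        # An operator has halted the system; nothing proceeds automatically.
        return "halted"
    if mode is OversightMode.IN_THE_LOOP or confidence < review_threshold:
        return "human_review"
    return "automated"
```

Checking the override first means the stop control works in production regardless of mode or model confidence.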

Complete Conformity Assessments and Registration

Before launching your high-risk AI system, you must complete a conformity assessment as outlined in Article 43. While most high-risk systems qualify for internal control (self-assessment), applications involving biometrics, critical infrastructure, or law enforcement require a third-party assessment by an accredited notified body.

| Assessment Type | Applicability | Assessor | Requirements |
|---|---|---|---|
| Internal Control | Most high-risk AI systems | Provider (Self-assessment) | Technical documentation & QMS |
| Third-Party Assessment | Biometrics, critical infrastructure, law enforcement | Accredited "Notified Body" | Independent audit & certification |

After passing the assessment, you’ll need to affix the CE marking and register the system in the EU's public database as required by Article 49. Be aware that any significant changes to your model’s algorithm, training data, or intended purpose may require a new conformity assessment.

"A declaration of conformity unsupported by real assessment work is a liability, not a shield."

- AktAI

The Centre for European Policy Studies estimates that achieving compliance for a single high-risk AI product can cost an SME up to $432,000. This includes $208,680 to $356,400 for initial QMS setup and $77,112 in annual maintenance costs.

With these steps in place, the next focus should be on creating thorough documentation and transparency measures to solidify your compliance efforts.

Step 3: Create Documentation and Transparency Processes

Notify Users About AI Interactions

Under Article 50, it's mandatory to let users know when they're interacting with an AI system - especially if it’s not immediately obvious. This notification should happen right at the start of their interaction or before the AI makes any decision that affects them. For example, chatbots should include a constant "AI Assistant" label in the interface instead of relying on a one-time pop-up. Similarly, AI-generated content like images, audio, or video should include machine-readable markers, such as C2PA metadata, that remain intact even after standard edits.

For high-risk systems, the disclosure must go further. You need to provide detailed explanations of the system's capabilities, its limitations, accuracy levels, and how it makes decisions. If your AI uses emotion recognition, for instance, you should include a modal that explains the purpose of analyzing facial expressions before asking for camera access.

"The EU AI Act signals the end of 'unseen' AI. For engineering teams, the shift is from pure optimization to verifiable safety."

- Olena Tkachuk, UI Application Development Expert, Grid Dynamics

This level of transparency ties into earlier risk management strategies, ensuring accountability across the entire lifecycle of your AI systems.

Keep Required Technical Records

Notifying users is just the first step. To meet transparency requirements, you also need to maintain detailed technical records. Article 11 requires concise documentation of your system's architecture, design, training data, and performance metrics, which must be preserved for at least 10 years after the system is launched. For high-risk systems, Article 12 adds another layer: an automatic logging mechanism to track significant events throughout the system's lifecycle - essentially creating a "decision diary."

These logs should include key details like inputs, system state, context, model version, confidence scores, timestamps, and any overrides or reviews of AI outputs, along with the rationale behind those overrides. To protect privacy while maintaining traceability, you could use hashing methods (like SHA-256) for input data instead of storing raw personal information. A tiered storage system can help manage this data efficiently: real-time monitoring can rely on hot storage solutions like PostgreSQL or MongoDB for the first 30 days, while long-term records can be moved to cold storage options like WORM or archival systems for the required 7–10 years. Additionally, creating a MODEL_CARD.md file in your repositories can document the model's purpose, version history, limitations, and the lineage of its training data.
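A log entry along these lines could be built as follows. This is a minimal sketch using Python's standard `hashlib` and `json` modules. The entry layout, the function names, and the 30-day hot window are assumptions based on the description above. Note that hashing low-entropy personal data does not anonymize it, so treat the digest as a traceability aid rather than a privacy guarantee.

```python
import hashlib
import json
from datetime import datetime, timedelta, timezone


def hash_input(payload: dict) -> str:
    """SHA-256 of a canonical JSON form, so key order does not change the digest."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def build_log_entry(payload, output, model_version, confidence,
                    override_reason=None, now=None) -> dict:
    """Build one decision-log entry that stores a digest instead of raw input."""
    now = now or datetime.now(timezone.utc)
    return {
        "timestamp": now.isoformat(),
        "model_version": model_version,
        "input_sha256": hash_input(payload),
        "output": output,
        "confidence": confidence,
        "overridden": override_reason is not None,
        "override_reason": override_reason,
    }


def storage_tier(logged_at: datetime, now: datetime, hot_days: int = 30) -> str:
    """Entries stay in hot storage for hot_days, then move to cold storage."""
    return "hot" if now - logged_at <= timedelta(days=hot_days) else "cold"
```

Serializing with sorted keys before hashing means the same input always yields the same digest, which lets an auditor match a stored record to a disputed decision.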

"The organizations that can produce clear, complete records fare far better than those scrambling to explain systems they never documented."

- Isabel Budenz, AI Compliance Counsel, Integrity Studio

Ensure Vendor Compliance

Your transparency efforts should extend to any third-party systems you use. Even when you rely on external AI products, you remain accountable for compliance. Article 47 requires you to verify that your vendors have completed a conformity assessment. This means requesting and retaining the legally binding "EU Declaration of Conformity" for a minimum of 10 years.

Vendor contracts should include specific compliance clauses to ensure access to technical documentation, establish incident reporting protocols, and require cooperation with regulatory bodies. When assessing vendors, request the technical documentation outlined in Annex IV, which includes risk management results and human oversight details. Keep in mind that significant changes to a vendor's system, such as updates to training data or architecture, might necessitate a new conformity assessment.

With over 50% of European organizations lacking an inventory of their AI systems, prioritizing thorough vendor verification is more important than ever.

Step 4: Plan Your Compliance Timeline

Actions to Complete Before August 2, 2026

The EU AI Act's high-risk AI obligations will be enforceable starting August 2, 2026. With your risk classification and documentation ready, it's time to map out a clear compliance plan. A suggested approach is a 90-day sprint: dedicate 30 days to inventory, 30 days to risk classification and gap analysis, and 30 days to operational readiness and documentation.
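The 90-day sprint can be laid out on a calendar with a few lines of date arithmetic. The sketch below assumes three equal 30-day phases, as suggested above. The `sprint_plan` and `latest_start` helpers are illustrative names.

```python
from datetime import date, timedelta

PHASES = (
    "inventory",
    "risk classification and gap analysis",
    "operational readiness and documentation",
)


def sprint_plan(start: date, phase_days: int = 30) -> list:
    """Return start and end dates (inclusive) for each sprint phase."""
    plan = []
    for i, name in enumerate(PHASES):
        phase_start = start + timedelta(days=i * phase_days)
        phase_end = phase_start + timedelta(days=phase_days - 1)
        plan.append({"phase": name, "start": phase_start, "end": phase_end})
    return plan


def latest_start(deadline: date, phase_days: int = 30) -> date:
    """Last start date that still finishes all phases before the deadline."""
    return deadline - timedelta(days=len(PHASES) * phase_days)
```

With a deadline of August 2, 2026, `latest_start` returns May 4, 2026, and the final phase then ends on August 1, 2026. That leaves no buffer, so an earlier start is safer.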

Key tasks include setting up a Quality Management System (QMS) as required under Article 17, completing conformity assessments, and registering your AI systems in the public EU database with CE marking before deployment. Additionally, ensure that mandatory AI literacy training is part of your preparation.

Compliance comes with a financial commitment. Large enterprises should budget between $8–15 million for initial compliance, while small and medium-sized enterprises (SMEs) may face costs ranging from $500,000 to $2 million. For SMEs, a single high-risk AI product could cost up to $432,000 to meet compliance standards. With only 18% of European employers feeling "very prepared" for the Act, early planning is essential.

"The EU AI Act's August 2026 deadline enforces high-risk AI rules... with penalties reaching €35 million or 7% of global revenue."

- AI2Work Editorial Team

To streamline compliance, consider appointing a board-level AI officer to oversee efforts across legal, IT, and data science teams. It's also important to review vendor contracts, ensuring that third-party providers deliver an EU declaration of conformity and clearly outline liability.

Ongoing Compliance After the Deadline

Compliance doesn’t stop once the August 2, 2026, deadline passes. Providers must continue monitoring the performance of high-risk AI systems post-deployment. Deployers are responsible for overseeing system operations and reporting any major issues or malfunctions to providers or national authorities immediately.

Human oversight remains a cornerstone of compliance. Assign trained personnel to intervene when necessary. Additionally, deployers are required to keep system-generated logs for at least six months to ensure traceability. Maintaining high-quality input data is equally critical to avoid algorithmic bias or performance issues.

Annual compliance costs are estimated at $2–5 million for large enterprises and $200,000–$500,000 for SMEs. Stay informed about updates like Codes of Practice and Implementing Acts from the EU AI Office, as these will guide technical standards.

Handle Existing Systems and Transition Periods

For AI systems already on the market before August 2, 2026, some may qualify for grandfathering provisions. These systems typically won’t need to comply retroactively unless significant changes are made to their design or purpose. To manage this, establish a tracking system to monitor updates.

Timelines vary depending on the type of AI. General-purpose AI (GPAI) models available before August 2, 2025, have until August 2, 2027, to comply fully. High-risk AI integrated into regulated products, such as medical devices or automotive systems, also has until August 2, 2027. Public sector organizations deploying high-risk AI systems have the most extended timeline, with a deadline of August 2, 2030.

| Deadline | Milestone | Affected Systems |
|---|---|---|
| August 2, 2025 | GPAI model obligations apply | General-Purpose AI |
| August 2, 2026 | Full high-risk requirements (Annex III) | Standalone High-Risk AI |
| August 2, 2027 | High-risk AI in regulated products | Medical devices, Automotive |
| August 2, 2027 | Existing GPAI models must comply | GPAI on market before Aug 2025 |
| August 2, 2030 | Public authority systems | Public sector High-Risk AI |
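The milestones in the table can be turned into a simple lookup for tracking how much time each system has left. The sketch below uses the dates from the table. The category keys and the `days_remaining` helper are hypothetical labels for this example.

```python
from datetime import date

# Dates from the table above; keys are illustrative labels.
DEADLINES = {
    "gpai": date(2025, 8, 2),
    "standalone_high_risk": date(2026, 8, 2),
    "regulated_product_high_risk": date(2027, 8, 2),
    "existing_gpai": date(2027, 8, 2),
    "public_sector_high_risk": date(2030, 8, 2),
}


def days_remaining(system_type: str, today: date) -> int:
    """Days until the deadline for this category; negative once it has passed."""
    return (DEADLINES[system_type] - today).days
```

Attaching one of these keys to each inventory record makes it easy to sort systems by urgency.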

"The transition period offers time to prepare, not to wait."

There’s a possibility that the November 2025 Digital Omnibus proposal could extend high-risk deadlines to December 2027, but this isn’t guaranteed. To avoid risks, focus on meeting the current deadlines. These milestones are crucial for maintaining compliance with the EU AI Act over the long term.


Conclusion

The EU AI Act is set to change how businesses approach artificial intelligence, making compliance not just an option but a requirement. Non-compliance could lead to penalties as high as €35 million or 7% of global revenue, with enforcement expected to be as strict as GDPR, which has already resulted in $7.7 billion in fines since 2018.

To navigate this shift, companies need to take four essential steps: inventory all AI systems, classify them by risk level, apply technical controls and documentation for high-risk systems, and adhere to the compliance timeline, with full requirements kicking in by August 2, 2026.

The urgency can't be overstated - most organizations are unprepared, and setting up a compliance program typically takes 4 to 6 months. Michele Cimmino from Lasting Dynamics highlights the importance of proactive planning:

"Compliance must be architectural. It must be designed into the system from the beginning... into the data pipelines, the model training processes, the inference engines, the logging systems, the user interfaces, and the deployment infrastructure".

Even after meeting the deadline, the work continues. Businesses must conduct ongoing post-market monitoring, report serious incidents within 15 days, and maintain detailed documentation. Demonstrating a good-faith effort toward compliance can also help mitigate enforcement risks.

Rather than viewing the EU AI Act as a regulatory burden, organizations that prioritize it strategically will be better positioned to secure market access and avoid steep penalties. Start now - build your inventory, classify your systems, and integrate compliance into your AI infrastructure to stay ahead.

FAQs

Does the EU AI Act apply to my U.S. company?

Yes, it does. The EU AI Act applies to U.S. companies if their AI systems are used by customers, employees, or businesses within the EU market. It doesn't matter where the company is headquartered - if your AI system affects individuals or organizations in the EU, compliance with the Act is mandatory.

How do I know if my AI use case is “high-risk”?

To figure out whether your AI use case qualifies as "high-risk", you'll need to see if it fits into the categories outlined in Annex III of the EU AI Act. These categories cover areas such as employment, education, essential services, law enforcement, justice systems, and critical infrastructure. Additionally, it could be considered high-risk if it serves as a safety component of a product governed by EU regulations, as detailed in Article 6.

What evidence should I keep to prove compliance?

To meet the requirements of the EU AI Act, it's important to keep detailed records of your AI system's activities. This includes decision-making processes, technical documentation, interaction logs, data inputs, and risk assessments. These records serve as key evidence to show that your system complies with the Act's guidelines.
