Behavioral Data Anonymization for GDPR Compliance

GDPR compliance for behavioral data isn't optional - it’s mandatory. If your business collects data like browsing habits, search queries, or click-through rates, you're handling personal information under GDPR. Failure to comply can lead to fines up to €20 million or 4% of global annual revenue. Here's the key takeaway: anonymized data is exempt from GDPR, while pseudonymized data is still regulated.

Key Points:

- Anonymization: Irreversible process; removes GDPR obligations.

- Pseudonymization: Reversible process; remains under GDPR.

- Risks: Behavioral data is highly re-identifiable (e.g., 87% of people can be identified with just three data points).

- Techniques: Use methods like generalization, k-anonymity, or differential privacy to protect data while retaining its utility.

Actionable Steps:

- Map your data: Identify sensitive identifiers like IP addresses and browser metadata.

- Choose the right method: Use anonymization for data sharing and pseudonymization for internal use.

- Test your methods: Regularly assess re-identification risks and document your processes.

- Maintain governance: Keep encryption keys separate and review methods quarterly.

Bottom line: Proper anonymization protects privacy, ensures compliance, and allows you to use data effectively for analytics and AI training.

Learn Data Anonymization Techniques for GDPR & HIPAA Compliance

sbb-itb-5f36581

GDPR Requirements for Behavioral Data

The GDPR sets out clear rules and principles for handling behavioral data, addressing the challenges businesses face in managing this type of information.

What Counts as Personal and Behavioral Data

According to GDPR Article 4, personal data includes any information that can directly or indirectly identify an individual. For behavioral tracking, this covers IP addresses, cookie identifiers, advertising IDs, device fingerprints, and browser metadata. Even seemingly harmless details - like browser type, operating system, screen resolution, and timezone - can combine to create a unique fingerprint, identifying an individual.

The European Commission emphasizes that data that has been de-identified, encrypted, or pseudonymized still qualifies as personal data if it can be linked back to an individual. For example, replacing a user’s email with a random ID doesn’t eliminate GDPR responsibilities if that ID can connect to behavioral patterns or other identifiers.

GDPR’s scope extends beyond EU-based businesses. Article 3(2)(b) states that any company monitoring the behavior of individuals in the EU, even if headquartered outside the EU (e.g., in the U.S.), must comply. Placing analytics or advertising cookies on devices used by EU visitors counts as monitoring.

Next, let’s explore the six principles that underpin lawful data processing under GDPR.

6 Principles of Lawful Data Processing

GDPR Article 5 outlines six principles for processing personal and behavioral data. These principles are not just guidelines - they are enforceable legal requirements.

| Principle | Application to Behavioral Data |

|---|---|

| Lawfulness, Fairness, Transparency | You must have a valid legal basis (like user consent for non-essential tracking) and clearly explain what data you’re collecting and why. |

| Purpose Limitation | Data collected for one purpose, such as website analytics, cannot be used for another, like targeted advertising, without obtaining new consent. |

| Data Minimization | Collect only the behavioral data needed for your stated purpose. Avoid gathering data "just in case" it might be useful later. |

| Accuracy | Ensure user profiles are accurate and provide ways for users to correct any errors. |

| Storage Limitation | Retain data only for as long as necessary. Keeping logs indefinitely violates this principle. |

| Integrity and Confidentiality | Use encryption, access controls, and secure storage to protect data from breaches or unauthorized access. |

For most behavioral tracking, consent under Article 6(1)(a) is required. To meet GDPR standards, consent must be freely given, specific, informed, and unambiguous - usually requiring a clear affirmative action.

Recent enforcement actions highlight the importance of adhering to these rules. In January 2022, the French regulator CNIL fined Google $150 million for making it harder to reject cookies than to accept them, violating the principle of free consent. Similarly, in December 2020, Amazon was fined $35 million for placing advertising cookies without prior consent.

While some businesses rely on "legitimate interest" under Article 6(1)(f) for basic analytics, this is only acceptable when tracking is anonymized and doesn’t follow users across sessions. In June 2023, Meta faced a $390 million fine from the Irish Data Protection Commission for improperly using "contractual necessity" instead of obtaining consent for behavioral advertising. The takeaway? If you’re building detailed user profiles or tracking users over time, explicit consent is non-negotiable.

Transparency is another key requirement under Articles 13 and 14. Businesses must provide clear privacy and cookie policies that explain what data is collected, why it’s collected, how long it’s retained, and who else has access to it. Users also have rights over their data, including the right to access it, request its deletion, object to marketing profiling, and avoid automated decision-making.

However, compliance comes with challenges. When companies implement GDPR-compliant consent banners, cookie opt-in rates among EU audiences can drop to as low as 30%, leaving a large portion of user behavior untracked.

Anonymization vs Pseudonymization

Anonymization vs Pseudonymization: GDPR Compliance Differences

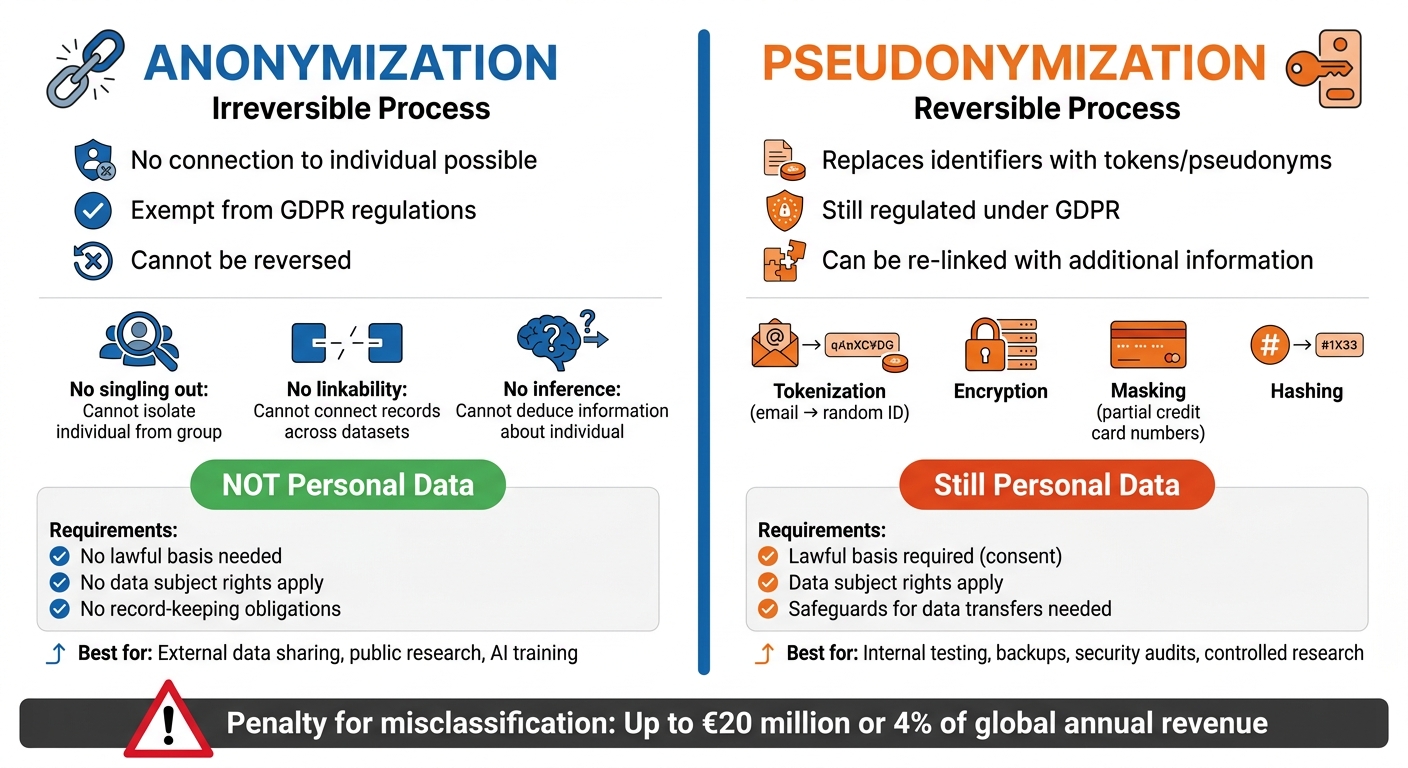

Understanding the difference between anonymization and pseudonymization is essential when dealing with GDPR requirements for behavioral data. While both techniques aim to reduce privacy risks, they come with distinct legal implications.

What Is Anonymization

Anonymization involves an irreversible process that completely severs any connection between the data and the individual it pertains to. Once data is fully anonymized, it no longer falls under GDPR regulations.

"The principles of data protection should therefore not apply to anonymous information, namely information which does not relate to an identified or identifiable natural person or to personal data rendered anonymous in such a manner that the data subject is not or no longer identifiable." - GDPR Recital 26

For data to qualify as anonymized, it must meet three strict criteria:

- No singling out: It should be impossible to isolate an individual from a group.

- No linkability: Records cannot be connected to the same person across different datasets.

- No inference: No information about an individual can be deduced from the remaining data.

Achieving true anonymization is especially difficult with behavioral data. Identifiers like IP addresses, browser fingerprints (e.g., screen resolution, operating system, installed fonts), and precise session timestamps can still make it possible to single out individuals.

What Is Pseudonymization

Pseudonymization, on the other hand, is a reversible process. It replaces direct identifiers with tokens or pseudonyms while keeping the ability to re-link the data to individuals through separate information, such as encryption keys or mapping tables.

Common pseudonymization methods include:

- Tokenization (e.g., replacing email addresses with random IDs)

- Encryption

- Masking (e.g., showing only partial credit card numbers)

- Hashing low-entropy data like names

The key difference is that pseudonymized data can still be linked back to individuals if someone has access to the additional information needed for re-identification. According to EDPB Guidelines 01/2025, any party within the "pseudonymisation domain" - those who hold or can derive the de-identification key - must treat the data as personal under GDPR.

"Pseudonymisation means the processing of personal data in such a manner that the personal data can no longer be attributed to a specific data subject without the use of additional information, provided that such additional information is kept separately." - GDPR Article 4(5)

How Each Affects GDPR Compliance

The choice between anonymization and pseudonymization has a direct impact on GDPR compliance.

-

Anonymized data is no longer considered personal data. This means:

- No lawful basis for processing is required.

- Data subject rights do not apply.

- Record-keeping obligations, like maintaining Records of Processing Activities, are not necessary.

-

Pseudonymized data, however, remains subject to GDPR. This includes:

- Requiring a lawful basis for processing, such as explicit consent.

- Honoring data subject rights.

- Implementing safeguards for data transfers to third parties.

The EDPB's 2025 Coordinated Enforcement Framework specifically targets cases where businesses use "inefficient anonymisation techniques" as a substitute for deletion. Misclassifying pseudonymized data as anonymized can result in penalties of up to €20 million or 4% of global annual revenue.

In practice:

- Use anonymization for external data sharing, public research, or training AI models when GDPR obligations need to be eliminated.

- Opt for pseudonymization in scenarios like internal testing, backups, security audits, or research where retaining data utility and the ability to re-contact individuals is necessary under controlled conditions.

Anonymization Techniques for Behavioral Data

When working with behavioral data, it's critical to choose techniques that reduce the risk of re-identifying individuals while still keeping the data useful for analysis and creating high-converting lead forms.

Generalization and Suppression

Generalization involves replacing specific values with broader categories. For example, instead of recording a user's exact age as 34, you might store it as "30–39." Similarly, detailed location data, like a full ZIP code (90210), can be shortened to a partial ZIP (902**) or a larger geographic region.

Suppression, or redaction, removes identifying attributes entirely. For instance, you could delete IP addresses or replace precise timestamps with just the date.

The tricky part with behavioral data is striking a balance: remove too much detail, and the data loses its value; remove too little, and you risk exposing individuals. Even seemingly harmless combinations, like browser fingerprint, screen resolution, and timezone, can uniquely identify someone.

To further bolster anonymity, consider applying k-anonymity.

k-Anonymity

With k-anonymity, each record in the dataset is made indistinguishable from at least k–1 other records based on specific quasi-identifiers, such as age range, gender, or location. For instance, if k = 5, each individual is hidden within a group of at least five people.

This method complements generalization and suppression by ensuring a minimum group size for anonymity. The concept gained attention when Latanya Sweeney famously re-identified Massachusetts Governor William Weld's medical records in the 1990s. She matched ZIP codes, birth dates, and gender from voter rolls to medical data, exposing vulnerabilities in anonymization.

However, k-anonymity isn't foolproof. Researchers demonstrated its limitations in a high-profile case involving movie ratings, which eventually led to legal action and the cancellation of future challenges. While k = 5 is a common starting point, sensitive data - like healthcare records - often requires much higher k values, such as 10 or even 100, to ensure adequate protection.

Differential Privacy

For a more advanced approach, differential privacy uses statistical noise to protect individual data. This method ensures that the result of a query remains nearly the same whether or not a specific individual's data is included. It provides a mathematical safeguard against re-identification, even if an attacker has access to external information.

Differential privacy relies on an epsilon (ε) parameter to manage the tradeoff between privacy and accuracy. A smaller epsilon (e.g., 0.1) offers stronger privacy but less accurate results, while a larger epsilon (e.g., 10) allows for more precise data but weaker privacy. The U.S. Census Bureau applied this technique to safeguard data in the 2020 census.

"Differential privacy is the most advanced formal anonymization model... It defines a mathematical guarantee: the result of a query to a database should not significantly differ regardless of whether a specific individual's data is in the dataset or not."

– nFlo

Major players like Google and Apple have adopted differential privacy on a large scale to gather behavioral data while protecting individual identities. Businesses managing large datasets can use tools such as the Google Differential Privacy Library or the ARX Data Anonymization Tool to implement this method. Just keep an eye on your "privacy budget", as each query consumes epsilon, limiting the number of queries you can run on the same dataset.

How to Implement Anonymization Processes

This section outlines practical steps to integrate anonymization and pseudonymization into your workflows. By identifying sensitive data, validating methods, and setting up compliance controls, you can align your processes with GDPR requirements while maintaining data utility.

Finding Sensitive Identifiers in Behavioral Data

Start with data mapping to document where behavioral data is collected, stored, and who has access to it. This foundational step ensures you know exactly what you're working with.

Next, classify your data by sensitivity. Standard personal data includes names and email addresses, while sensitive categories encompass biometric and health information. Special identifiers, such as IP addresses, MAC addresses, cookie IDs, advertising IDs, and browser fingerprints (like operating system details or screen resolution), also require attention.

Pay close attention to quasi-identifiers - data points that may seem harmless on their own but can uniquely identify individuals when combined. This "mosaic effect" highlights the need to evaluate how data points interact.

When assessing risk, focus on three threats:

- Singling out: Isolating a specific record.

- Linkability: Connecting records across datasets.

- Inference: Deducing new information from existing data.

To test these risks, conduct a "motivated intruder" test. This involves simulating whether someone with public records or social media access could re-identify individuals in your dataset. For larger organizations, automated PII detection tools powered by Natural Language Processing can scan for more than 320 entity types, streamlining the process.

Testing and Risk Assessment

Testing ensures your anonymization measures are effective. The UK's Information Commissioner's Office explains:

"Anonymisation is about reducing the likelihood of a person being identified or identifiable to a sufficiently remote level."

Regularly run the motivated intruder test to confirm that individuals cannot be re-identified using available resources. Assess your data against the risks of singling out, linkability, and inference. Tools like Anonymeter can help quantify these risks. For high-risk data, aim for a re-identification risk score below 0.05%. If you're using k-anonymity, aim for k≥5 for standard data and k≥10 for sensitive data.

You also need to balance privacy with data usefulness. Try to keep the Information Loss Ratio below 20% to ensure anonymized data remains valuable for analysis. Document all processes in a DPIA, including methods, identifiers, and your rationale for irreversibility.

Keep in mind that anonymization isn't permanent. Advances in computing and new public datasets can make previously anonymous data identifiable again. Review your anonymization methods quarterly and conduct an annual re-identification risk assessment.

Governance and Key Management

Once your anonymization measures are validated, establish strong governance and key management practices to maintain data security.

For pseudonymized data, keep the mapping table or key completely separate from the dataset. Apply distinct technical and organizational protections to meet GDPR requirements. In complex systems, using a Central Token Vault can ensure consistent pseudonymization across platforms like CRM, billing, and analytics tools.

Under GDPR Article 30, maintain Records of Processing Activities. These should detail your anonymization methods, the basis for irreversibility, and the legal grounds for processing. Log every anonymization operation, including detected data, applied methods, timestamps, and authorizations.

Set a 90-day implementation roadmap:

- First 30 days: Data discovery.

- Next 30 days: Pilot validation.

- Final 30 days: Operationalization with clear runbooks and monitoring.

Define anonymization rules within a policy engine and apply them consistently across all data pipelines to minimize errors. Use strong encryption standards like AES-256 for symmetric encryption and Argon2id for key derivation. If handling highly sensitive behavioral data, consider zero-knowledge infrastructure with EU-based hosting to avoid exposure under the US Cloud Act.

The European Data Protection Board has noted that "inefficient anonymisation techniques used as an alternative to deletion" are a common compliance issue. Regular testing, thorough documentation, and strong governance can make anonymization a powerful privacy safeguard rather than just a regulatory checkbox.

Conclusion

This guide has outlined key strategies for ensuring GDPR compliance through behavioral data anonymization. The distinction between true anonymization and pseudonymization is crucial - only true anonymization removes data from GDPR's scope, while pseudonymized data remains regulated.

Anonymization isn't as simple as stripping away names or email addresses. In fact, studies show that 87% of Americans can be uniquely identified using just a combination of zip code, birth date, and gender. To comply, your methods must address risks like singling out, linking, and inference.

The European Data Protection Board has flagged anonymization compliance as a priority for 2025 enforcement efforts. With GDPR penalties reaching up to $20 million or 4% of global annual revenue, adopting rigorous anonymization practices is not optional - it’s essential.

Start by mapping data flows in detail, identifying quasi-identifiers such as IP addresses, browser fingerprints, and location data. Select anonymization techniques suited to your needs: differential privacy for analytics, k-anonymity for shared datasets, or permanent redaction for public data. Document your approach thoroughly in your Data Protection Impact Assessment, including the parameters used and evidence supporting the irreversibility of your methods.

Anonymization isn’t a one-and-done process. As experts Andrew Burt, Sophie Stalla-Bourdillon, and Alfred Rossi observed:

"One of [GDPR's] most important provisions is unclear... it's unclear anyone really knows what 'anonymization' means in practice".

To stay ahead, schedule quarterly reviews of your anonymization techniques and conduct annual re-identification risk assessments. This ensures that your methods remain effective as technology evolves and new datasets emerge. Regular evaluations and robust data mapping not only support compliance but also create opportunities for secure and ethical data sharing while safeguarding privacy.

FAQs

How can I prove my behavioral data is truly anonymized under GDPR?

To comply with GDPR, proving that your behavioral data is truly anonymized means demonstrating that it cannot reasonably be used to identify an individual by any likely method. This requires making the data completely irreversible, ensuring that no one can trace it back to the original person. Achieving this often involves applying techniques that prevent re-identification while meeting GDPR's rigorous standards for anonymity.

What’s the safest way to anonymize IP addresses, cookies, and device fingerprints?

To protect individuals' privacy, the best strategy is to mask or modify data so it can't be traced back to a person. For instance, you can use 2-byte masking for IP addresses. This keeps regional information intact while stripping away anything that could identify someone personally. On top of that, it’s crucial to implement anonymization methods that align with GDPR regulations. These techniques should ensure that personal data is secure and cannot be re-identified.

When should I use anonymization vs pseudonymization for analytics and AI?

When it comes to protecting personal data, two key approaches are often discussed: anonymization and pseudonymization.

Anonymization involves completely removing any identifying details, making it impossible to trace the data back to an individual. Once data is anonymized, it falls outside the scope of GDPR because there's no reasonable way to re-identify the individuals. This method ensures total privacy but requires rigorous measures to guarantee that re-identification isn’t feasible.

On the other hand, pseudonymization replaces personal identifiers with codes or other substitutes. While this enhances privacy, the data can still be linked back to individuals if the additional information (like a key or mapping file) is accessible. Because of this, pseudonymized data remains under GDPR regulations. It strikes a balance between maintaining privacy and preserving the data’s usability for analysis or other purposes, but it demands strict compliance with GDPR rules.

Related Blog Posts

Get new content delivered straight to your inbox

The Response

Updates on the Reform platform, insights on optimizing conversion rates, and tips to craft forms that convert.

Drive real results with form optimizations

Tested across hundreds of experiments, our strategies deliver a 215% lift in qualified leads for B2B and SaaS companies.

.webp)